Introduction

In the world of event-driven architecture, Apache Kafka is the undisputed heavyweight champion. But as any Tech Lead will tell you, the real challenge isn’t using Kafka; it’s managing it. If you are still configuring clusters by hand in the AWS Console (“click-ops”), you are one accidental click on a delete button away from a production disaster. Today, we’ll look at how to provision Amazon MSK (Managed Streaming for Apache Kafka) using Terraform, so that your infrastructure is versioned, repeatable, and secure.

1. Why Terraform for Kafka?

When you’re managing multiple environments (Dev, Staging, Prod), manual configuration is a liability. Using Terraform allows you to:

- Prevent Configuration Drift: Build every environment from the same code, so any difference between them is deliberate and visible.

- Auditability: Every change to your broker size or Kafka configuration (such as the default partition count) is tracked in Git.

- Security: Apply IAM policies and encryption settings the same way every time, leaving far less room for human error.

2. The Architecture

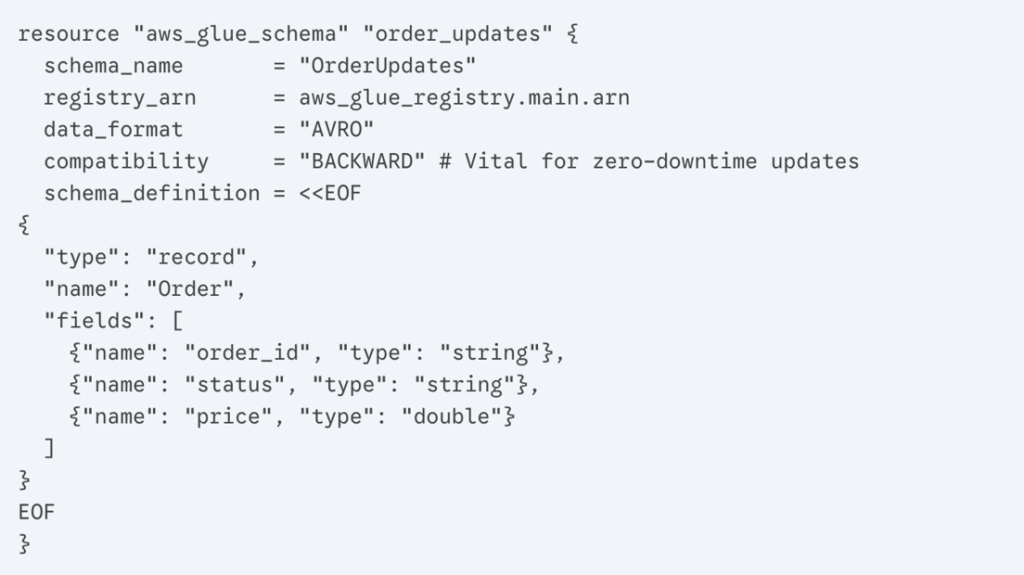

We are building a highly available MSK cluster with brokers spread across three Availability Zones (AZs), placed in the private subnets of a VPC.

- VPC: Isolated network for security.

- Brokers: 3-node MSK cluster.

- Security: TLS encryption in transit and IAM-based authentication.
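
To make the network side of this concrete, here is a minimal sketch of a security group for the brokers. It assumes clients connect from inside the VPC using IAM authentication, which MSK serves on port 9098. The variable names (`vpc_id`, `client_cidr_blocks`) and the resource name are illustrative, not taken from the original code.

```hcl
# Hypothetical inputs: supply your own VPC ID and the CIDR ranges of your clients.
variable "vpc_id" {
  description = "ID of the VPC that hosts the MSK brokers"
  type        = string
}

variable "client_cidr_blocks" {
  description = "CIDR ranges of the subnets where Kafka clients run"
  type        = list(string)
}

resource "aws_security_group" "msk" {
  name_prefix = "msk-brokers-"
  description = "Access to MSK brokers"
  vpc_id      = var.vpc_id

  # 9098 is the in-VPC broker port for IAM access control (always over TLS).
  ingress {
    description = "Kafka clients using IAM authentication"
    from_port   = 9098
    to_port     = 9098
    protocol    = "tcp"
    cidr_blocks = var.client_cidr_blocks
  }

  # Allow all outbound traffic; tighten this if your policy requires it.
  egress {
    from_port   = 0
    to_port     = 0
    protocol    = "-1"
    cidr_blocks = ["0.0.0.0/0"]
  }
}
```

Opening only the IAM port keeps plaintext (9092) and other listeners unreachable even if they were enabled by mistake.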

3. The Implementation

Step A: Provider Configuration

First, we define our AWS provider.
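
A minimal provider setup might look like the sketch below. The version constraints, the default region, and the `ManagedBy` tag are assumptions chosen for illustration; pin whatever versions your team has tested.

```hcl
terraform {
  # Assumed minimum versions; adjust to what your team actually runs.
  required_version = ">= 1.3"

  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.0"
    }
  }
}

# The default region is a placeholder, not a recommendation.
variable "aws_region" {
  description = "AWS region to deploy into"
  type        = string
  default     = "us-east-1"
}

provider "aws" {
  region = var.aws_region

  # Tag every resource so it is obvious in the console that Terraform owns it.
  default_tags {
    tags = {
      ManagedBy = "terraform"
    }
  }
}
```

Pinning the provider version keeps `terraform plan` output stable across machines and CI runs.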

Step B: Defining the MSK Cluster

This is the core of our infrastructure. We use the kafka.m5.large broker instance type to balance cost and performance.

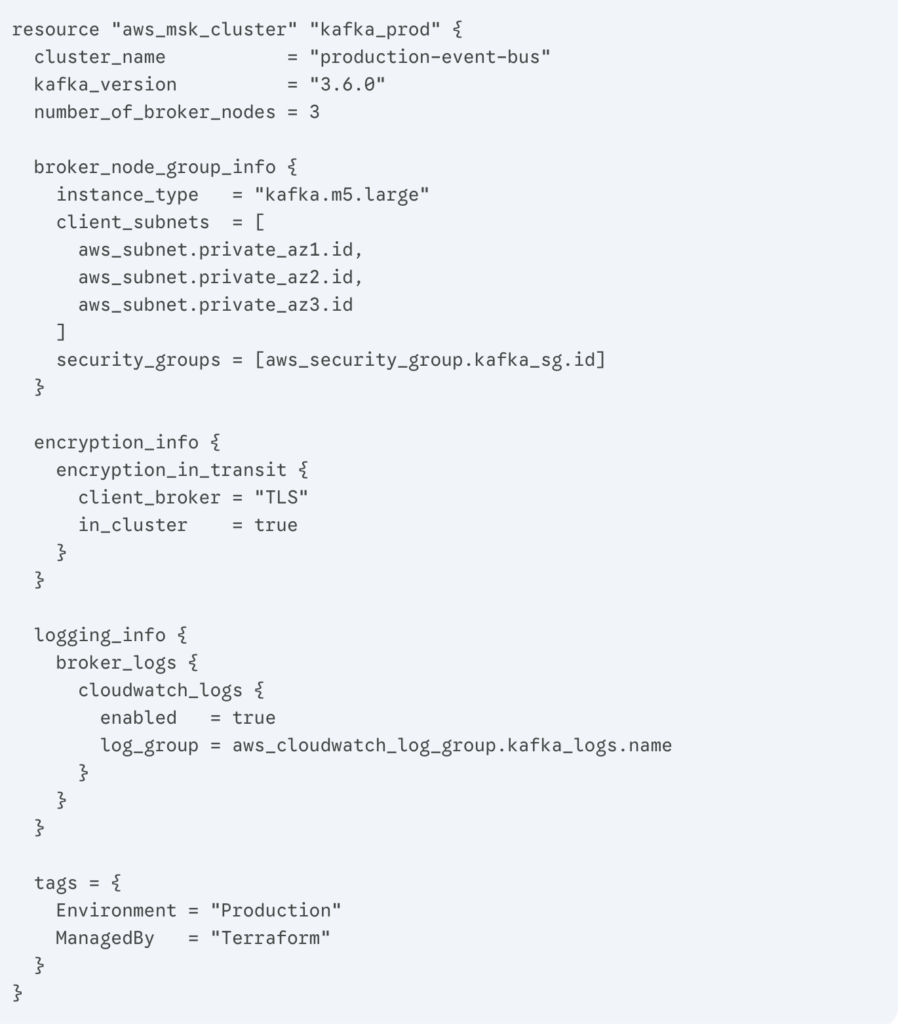

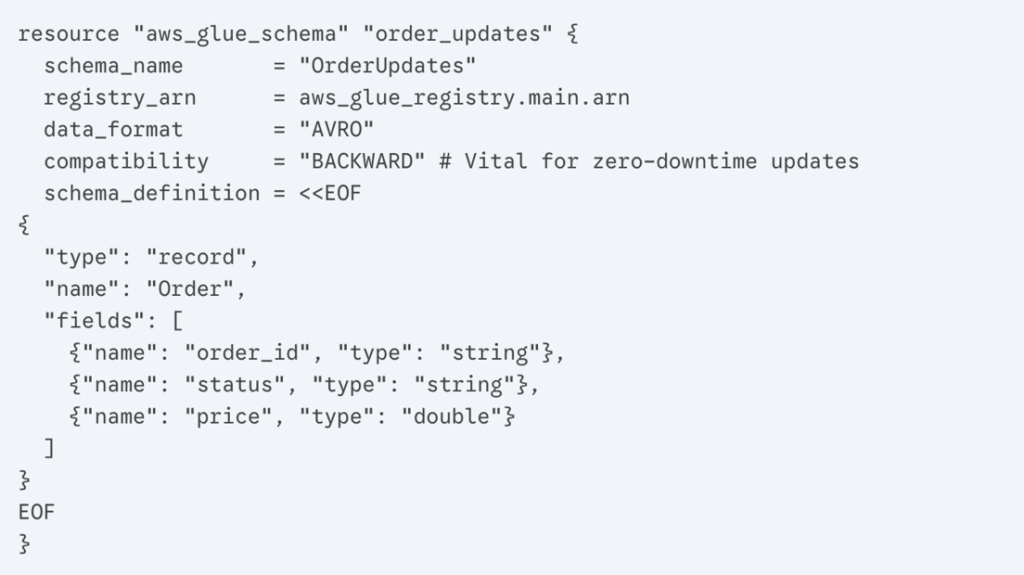
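
For readers who prefer text they can copy, here is a sketch of a cluster matching the architecture described earlier: three kafka.m5.large brokers, TLS in transit, IAM authentication, and broker logs sent to CloudWatch. It is not a transcription of the screenshots. The cluster name, Kafka version, volume size, log retention, and variable names are all assumptions.

```hcl
# Hypothetical inputs: pass in the subnets and security group from your network code.
variable "private_subnet_ids" {
  description = "Three private subnet IDs, one per Availability Zone"
  type        = list(string)
}

variable "msk_security_group_id" {
  description = "Security group attached to the brokers"
  type        = string
}

resource "aws_cloudwatch_log_group" "msk" {
  name              = "/msk/example-cluster" # assumed name
  retention_in_days = 14                     # assumed retention
}

resource "aws_msk_cluster" "this" {
  cluster_name           = "example-cluster" # assumed name
  kafka_version          = "3.6.0"           # assumed version
  number_of_broker_nodes = 3                 # must be a multiple of the subnet count

  broker_node_group_info {
    instance_type   = "kafka.m5.large"
    client_subnets  = var.private_subnet_ids
    security_groups = [var.msk_security_group_id]

    storage_info {
      ebs_storage_info {
        volume_size = 100 # GiB per broker, assumed
      }
    }
  }

  # TLS between clients and brokers, and between the brokers themselves.
  encryption_info {
    encryption_in_transit {
      client_broker = "TLS"
      in_cluster    = true
    }
  }

  # IAM-based authentication for clients.
  client_authentication {
    sasl {
      iam = true
    }
  }

  # Ship broker logs to CloudWatch.
  logging_info {
    broker_logs {
      cloudwatch_logs {
        enabled   = true
        log_group = aws_cloudwatch_log_group.msk.name
      }
    }
  }
}

# Connection string for clients that authenticate with IAM.
output "bootstrap_brokers_sasl_iam" {
  value = aws_msk_cluster.this.bootstrap_brokers_sasl_iam
}
```

With three subnets and three brokers, MSK places one broker in each Availability Zone.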

4. The Tech Lead’s Secret: Schema Management

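Building the cluster is great, but as a Lead, you need to care about data integrity. If a Node.js producer renames a field, consumers that still expect the old name can fail at runtime. We address this by defining an AWS Glue Schema Registry directly in our Terraform code. This gives services a shared, versioned contract: a schema change that violates the configured compatibility mode is rejected when it is registered, provided producers and consumers actually serialize through the registry.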
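
As an illustration, a registry with one Avro schema could be declared roughly as follows. The registry name, schema name, and fields are invented for the example, and `BACKWARD` is one of several compatibility modes you might choose.

```hcl
resource "aws_glue_registry" "events" {
  registry_name = "example-events" # assumed name
}

resource "aws_glue_schema" "order_created" {
  schema_name   = "order-created" # assumed name
  registry_arn  = aws_glue_registry.events.arn
  data_format   = "AVRO"
  compatibility = "BACKWARD" # new versions must be able to read data written with the previous one

  # Hypothetical event shape, written as an Avro record.
  schema_definition = jsonencode({
    type      = "record"
    name      = "OrderCreated"
    namespace = "com.example.events"
    fields = [
      { name = "order_id", type = "string" },
      { name = "amount_cents", type = "long" }
    ]
  })
}
```

Under `BACKWARD` compatibility, renaming `order_id` amounts to dropping one field and adding another with no default value, so the registry should refuse the new version instead of letting it reach consumers.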

5. Best Practices for Production

- Lifecycle Protection: Set prevent_destroy = true in the lifecycle block of your production cluster resources, so Terraform rejects any plan that would destroy them.

- Monitoring: Always enable CloudWatch logs (as shown in the code) to debug “Why did my consumer group stop?” at 3 AM.

- Variable-ize Everything: Never hardcode your VPC IDs. Use data sources or variables to make your code reusable.
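
Two of these practices can be sketched in a few lines. The first part looks up the VPC and its private subnets through data sources instead of hardcoded IDs; the `Name` and `Tier` tags are assumptions about how your network is tagged. The second part shows the shape of a lifecycle block. The same block goes inside an `aws_msk_cluster` resource; it is shown here on a small MSK configuration resource (with assumed name, version, and properties) so the example stays complete.

```hcl
# Hypothetical input: the Name tag of the VPC to deploy into.
variable "vpc_name" {
  description = "Value of the Name tag on the target VPC"
  type        = string
}

# Look the VPC up by tag instead of hardcoding its ID.
data "aws_vpc" "selected" {
  tags = {
    Name = var.vpc_name
  }
}

# Find the private subnets in that VPC (assumes they carry a Tier = private tag).
data "aws_subnets" "private" {
  filter {
    name   = "vpc-id"
    values = [data.aws_vpc.selected.id]
  }

  tags = {
    Tier = "private"
  }
}

resource "aws_msk_configuration" "prod" {
  name           = "example-prod-config" # assumed name
  kafka_versions = ["3.6.0"]             # assumed version

  server_properties = <<-PROPERTIES
    auto.create.topics.enable = false
    default.replication.factor = 3
    min.insync.replicas = 2
  PROPERTIES

  # Terraform will fail any plan that would destroy this resource.
  lifecycle {
    prevent_destroy = true
  }
}
```

The subnet IDs are then available as `data.aws_subnets.private.ids`. Keep in mind that prevent_destroy only guards the resource while its block is still present in the configuration.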

Conclusion

Moving your Kafka infrastructure to Terraform isn’t just an “automation task”—it’s a fundamental shift toward a more mature, stable engineering culture. It allows your team to focus on building features while the code handles the heavy lifting of the infrastructure.